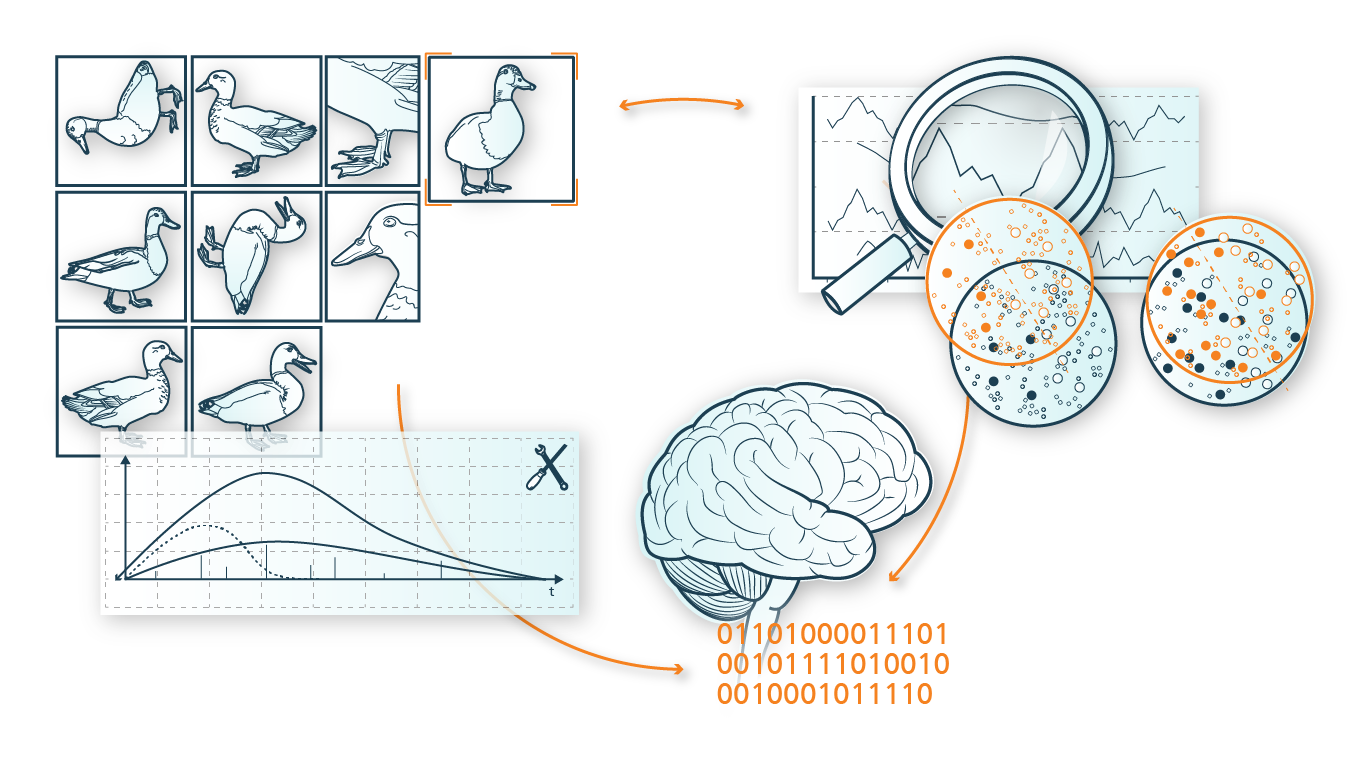

AutoML is one of the core competencies of the project group at the Munich site, which was set up as part of the ADA Lovelace Center and has already completed a number of industrial projects dealing specifically with AutoML. Often, this involves bridging the gap between the very abstract research field of "AutoML" and an industrial application that must ultimately generate added value. This is the classic case of "reality does not match research". For challenges such as unknown costs in classification, multimodal data, imbalanced datasets, or AutoML for sensor data, new approaches with AutoML systems tailored specifically to the application can help.

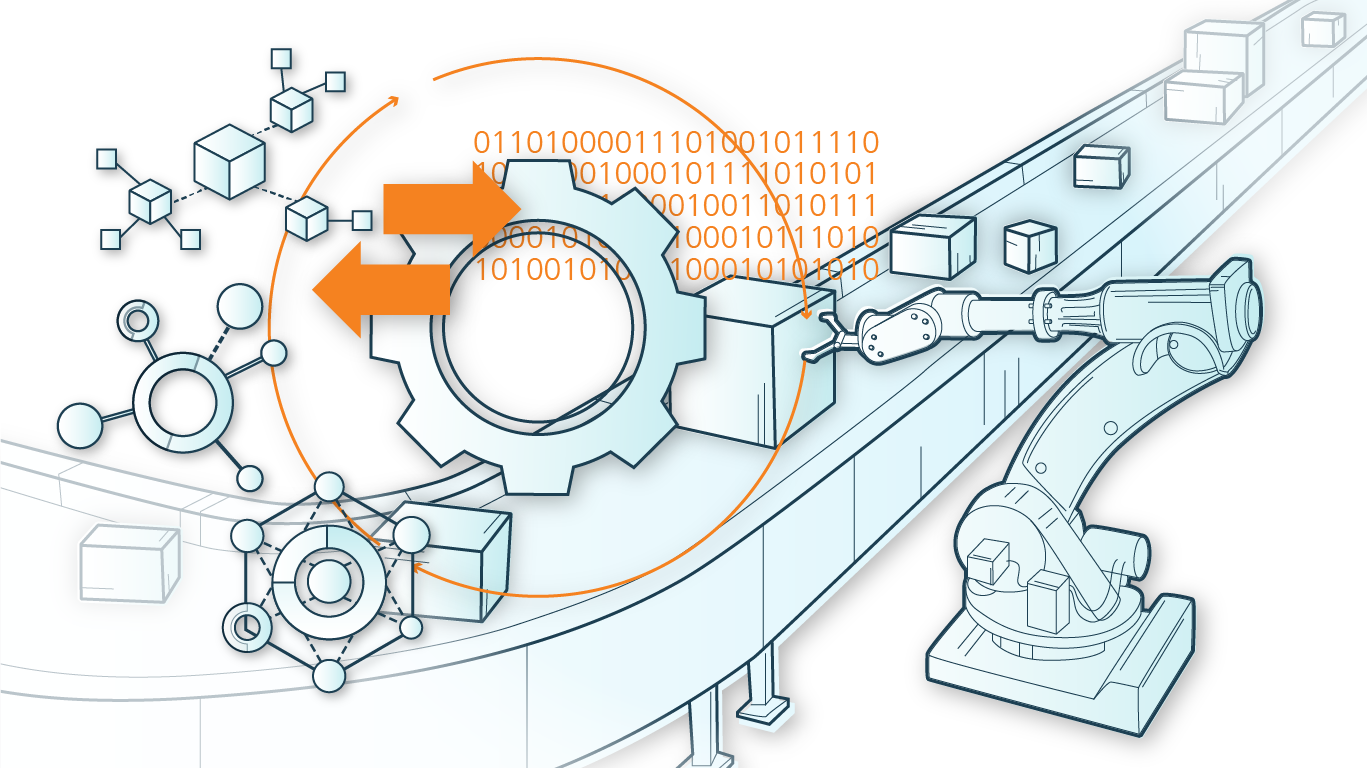

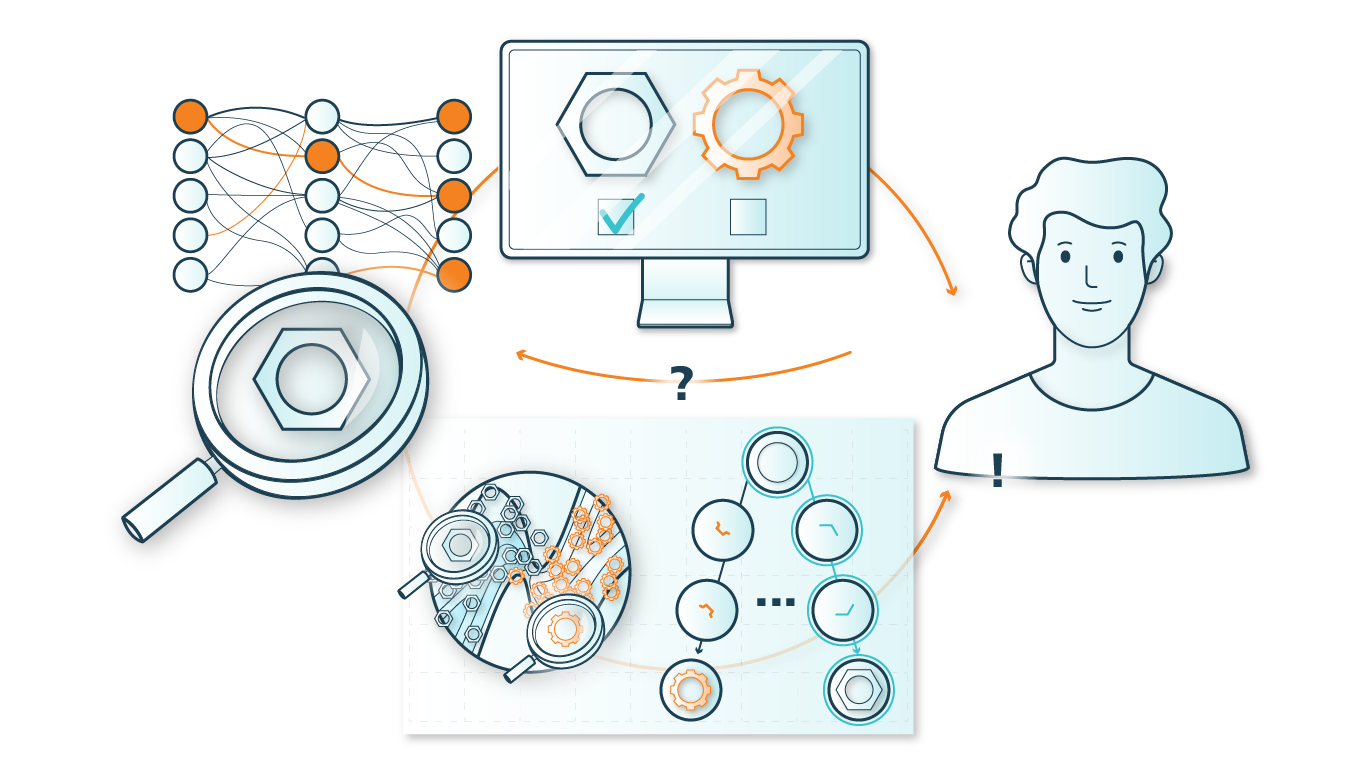

The first step toward successful AutoML is selecting a suitable search space, that is, deciding which methods, models, etc. can be tested. This search space is then searched for an optimal ML pipeline using a suitable optimization method. Model-based optimization (MBO) methods, most prominently Bayesian optimization, are often a good choice and have been used successfully in past projects, e.g. for the design of an AutoML system for quality assurance in industrial manufacturing. Evolutionary algorithms are a popular alternative that handles hierarchical and complex search spaces well and, in certain cases, scales better than MBO.
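The interplay of search space and optimizer can be illustrated with a minimal sketch of an evolutionary search over a hierarchical search space. Everything in it is an assumption made for illustration: the two model families, their hyperparameter ranges, and in particular `toy_score`, which is a synthetic stand-in for a real cross-validated evaluation. It is not the system used in the projects mentioned above.

```python
import random

# Hypothetical hierarchical search space: which hyperparameters exist
# depends on which model family is chosen.
SEARCH_SPACE = {
    "random_forest": {"n_trees": (10, 500), "max_depth": (2, 20)},
    "svm": {"log10_C": (-3, 3)},
}


def sample_config(rng):
    """Draw a random pipeline configuration from the search space."""
    model = rng.choice(sorted(SEARCH_SPACE))
    params = {
        name: rng.uniform(lo, hi)
        for name, (lo, hi) in SEARCH_SPACE[model].items()
    }
    return {"model": model, "params": params}


def mutate(config, rng, restart_rate=0.2):
    """Perturb the hyperparameters; occasionally resample the whole config."""
    if rng.random() < restart_rate:
        return sample_config(rng)
    space = SEARCH_SPACE[config["model"]]
    params = {}
    for name, value in config["params"].items():
        lo, hi = space[name]
        step = rng.gauss(0.0, 0.1 * (hi - lo))
        params[name] = min(hi, max(lo, value + step))  # clip to the valid range
    return {"model": config["model"], "params": params}


def toy_score(config):
    """Synthetic stand-in for a validation score (higher is better)."""
    p = config["params"]
    if config["model"] == "random_forest":
        return (0.9
                - ((p["n_trees"] - 300) / 500) ** 2
                - ((p["max_depth"] - 10) / 20) ** 2)
    return 0.8 - (p["log10_C"] / 3) ** 2


def evolve(score, generations=30, population_size=10, seed=0):
    """Simple (mu + lambda) evolutionary search; returns the best config found."""
    rng = random.Random(seed)
    population = [sample_config(rng) for _ in range(population_size)]
    for _ in range(generations):
        children = [mutate(parent, rng) for parent in population]
        # Keep the best configurations among parents and children (elitism).
        population = sorted(population + children, key=score, reverse=True)
        population = population[:population_size]
    return population[0]


best = evolve(toy_score)
print(best["model"], round(toy_score(best), 3))
```

In a real setting, each call to the score function would train and validate a pipeline, so scores would be cached rather than recomputed during sorting, and a model-based optimizer could replace `evolve` without changing the search space definition.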

Furthermore, AutoML complements the other competence pillars of the project group: Explainable Learning and Few-Labels Learning. For example, Few-Labels Learning methods are also configurable and must be selected differently depending on the application. This selection can be automated with suitable AutoML methods. Explainability, however, is often a weak point of AutoML systems if the optimal model is chosen on the basis of performance alone. The results of AutoML are then often black-box models that deliver very good results but are no longer interpretable. Multi-criteria optimization in the context of AutoML can combine two different metrics for evaluating a model, such as performance and interpretability, in one approach. Another approach is meta-modeling, where a black-box AutoML system is made explainable by a meta-model.

AutoML in Predictive Maintenance: ALONE - Self-learning Adaptive Logistic Networks

AutoML can be used in settings where very similar tasks occur repeatedly under slightly different circumstances. One example is predictive maintenance or machine health monitoring. Machine learning can predict the probability of failure of an (expensive) machine or the remaining time until failure, allowing optimized and predictable maintenance at minimal cost. Certain ML models and preprocessing methods are very promising for this kind of ML task, but in practice it is not feasible to manually select an optimal ML model for each kind of machine and environment. AutoML can help in this case by generating an optimal ML pipeline from all relevant methods and models for each specific application.

More information about the application "Self-optimization in adaptive logistics networks"

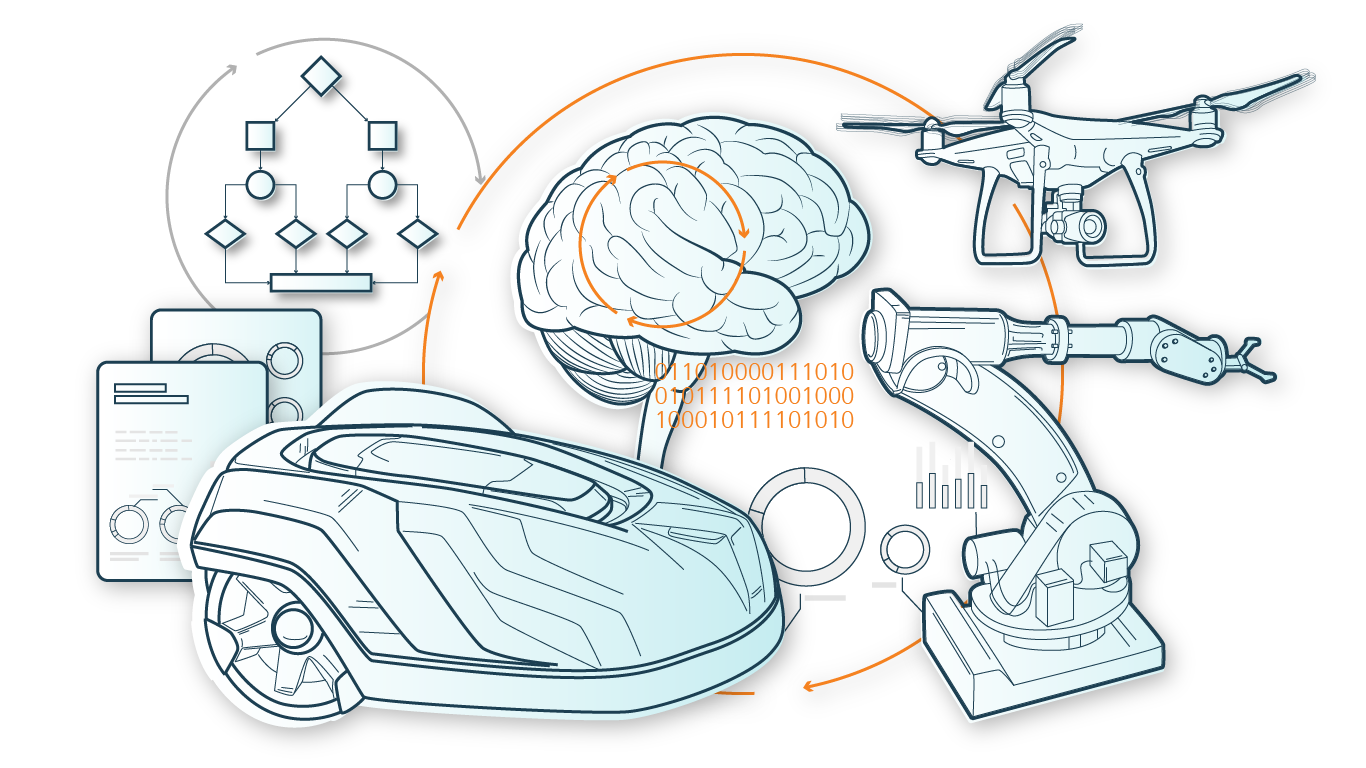

AutoML and Meta-Learning: AI Frameworks for Autonomous Systems

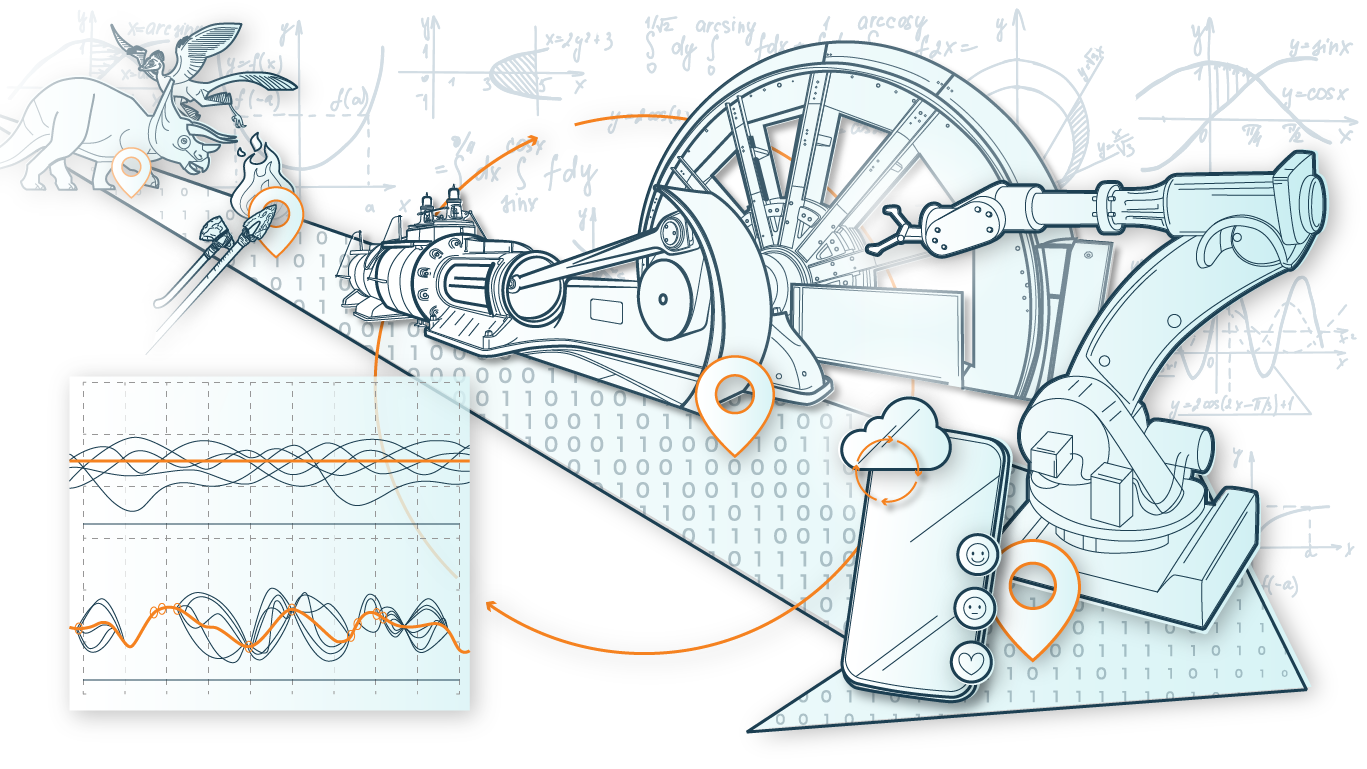

The application "AI Framework for Autonomous Systems" focuses on reinforcement learning methods, whose performance often depends heavily on certain hyperparameters. At the same time, reinforcement learning algorithms are extremely expensive in many cases. Therefore, efficient hyperparameter tuning for reinforcement learning was investigated in A05, and meta-learning was studied for this setting as well. Meta-learning tries to reuse information already learned on previous tasks to improve AutoML (or, in this case, hyperparameter tuning) on new tasks. Depending on the application, meta-learning can be relevant for finding an optimal pipeline ("warmstarting") or can even help to apply existing fixed architectures to new tasks ("transfer learning"). Meta-learning can also be a promising approach for recurring tasks that are similar, such as in the Self-learning Adaptive Logistic Networks application.
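The warmstarting idea can be sketched in a few lines: the best hyperparameters from earlier, similar tasks serve as the first candidates for a new task. The task history, the two-dimensional meta-features (imagined here as observation and action dimensions of an environment), and all hyperparameter values are hypothetical and only illustrate the mechanism; they do not describe the method developed in the application.

```python
import math

# Hypothetical record of earlier tuning runs: task meta-features and the
# best hyperparameters found. All values are made up for illustration.
PREVIOUS_TASKS = [
    {"meta": (4.0, 2.0), "best": {"learning_rate": 3e-4, "gamma": 0.99}},
    {"meta": (24.0, 6.0), "best": {"learning_rate": 1e-3, "gamma": 0.95}},
    {"meta": (8.0, 4.0), "best": {"learning_rate": 5e-4, "gamma": 0.98}},
]


def warmstart_configs(new_meta, history, k=2):
    """Return the best configs of the k previous tasks that are closest
    to the new task in (Euclidean) meta-feature space."""
    ranked = sorted(history, key=lambda task: math.dist(task["meta"], new_meta))
    return [dict(task["best"]) for task in ranked[:k]]


# Initial candidates for a new task with meta-features (5.0, 2.0).
initial_candidates = warmstart_configs((5.0, 2.0), PREVIOUS_TASKS)
print(initial_candidates)
```

The returned configurations would then seed the first iterations of the hyperparameter search instead of random samples, which matters most when each evaluation is as costly as a reinforcement learning run.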

AutoML as part of almost every application

In the ADA Lovelace Center, questions from the applications "Intelligent Power Electronics" and "Monitoring and fault diagnosis of industrial wireless systems", which also belong to the research field of Automated Learning, were discussed for the first time. As a result, research was carried out there on automatically determining the stability of DC networks and radio networks using ML methods.

Hyperparameter optimization, feature engineering, model selection, etc. are part of almost every application of machine learning. The competences of the AutoML pillar are therefore also used in many other projects beyond the ADA Lovelace Center, for example in the project "Demand Forecast as a Service (dFASSI)".

AutoML for the generation of AI models with minimal energy demand (AutoML ASIC)

Integrating energy demand prediction into multi-criteria AutoML methods allows us to automatically generate AI processing chains for embedded hardware with minimal energy demand. As in many industrial ML applications, two conflicting objectives are relevant to users: models with low energy requirements tend to be less complex and therefore show weaker performance. A multi-criteria AutoML solution therefore offers developers several (Pareto-optimal) solutions. This way, they can choose an optimal trade-off between prediction accuracy (performance) and later energy demand, adapted to their own hardware configuration. In addition to evolutionary algorithms and Bayesian optimization, methods from the field of reinforcement learning (e.g. augmented random search) are also used here.
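To make "Pareto-optimal" concrete, the following minimal sketch filters a set of candidate models down to those that are not dominated on accuracy and predicted energy demand. The candidate names and all numbers are invented for illustration, and the energy unit (millijoules per inference) is an assumption; in a real system these values would come from evaluation and from the energy demand prediction.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    name: str
    accuracy: float   # higher is better
    energy_mj: float  # assumed: predicted energy per inference in mJ, lower is better


def dominates(a, b):
    """True if a is at least as good as b on both objectives
    and strictly better on at least one."""
    return (a.accuracy >= b.accuracy and a.energy_mj <= b.energy_mj
            and (a.accuracy > b.accuracy or a.energy_mj < b.energy_mj))


def pareto_front(candidates):
    """Keep every candidate that no other candidate dominates."""
    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]


# Hypothetical candidates produced by an AutoML run.
candidates = [
    Candidate("tiny_cnn", 0.88, 0.4),
    Candidate("small_cnn", 0.91, 1.1),
    Candidate("wide_cnn", 0.90, 1.5),   # dominated by small_cnn
    Candidate("large_cnn", 0.95, 3.0),
]

front = pareto_front(candidates)
print([c.name for c in front])  # ['tiny_cnn', 'small_cnn', 'large_cnn']
```

The developer then picks one point from `front` according to the energy budget of the target hardware, rather than receiving a single model optimized for accuracy alone.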

Learn more about TinyML